ORA-01795 and JPA function workaround

27 November 2020

Few posts ago i wrote about Hibernate Interceptor to solve ORA-01795: maximum number of expressions in a list is 1000 error. This way can be very helpful in case you got this limitation, but by some reasons not able to perform refactoring.

Another way to get it done with JPQL is a JPA function(). We used similar approach to implement JPA paging with COUNT(*) OVER().

So, let's see how less code we need to get it work with custom Dialect workaround.

Custom dialect:

public class MyOraDialect extends Oracle10gDialect {

public MyOraDialect () {

super();

// sql tuples workaround

registerFunction( "safeTupleIn", new VarArgsSQLFunction( StandardBasicTypes.INTEGER, "(", ",0),(", ",0)"));

// custom SQLFunction workaround

registerFunction( "safeIn", new SafeInFunction());

}

}

Usage example:

@Test

public void safeTupleIn1000Test() throws Exception {

EntityManager em = Persistence.createEntityManagerFactory("myDSTestOra").createEntityManager();

// this.generateTestData(em);

...

Query query = em.createQuery("SELECT b as post FROM OraBlogEntity b where (id, 0) in (function('safeTupleIn',:ids))", Tuple.class);

query.setParameter("ids", idsList);

List<Tuple> tpList = query.getResultList();

System.out.println(tpList.size());

}

or bit cleaner:

...

Query query = em.createQuery("SELECT b as post FROM OraBlogEntity b where id in (function('safeIn', id, :ids))", Tuple.class);

query.setParameter("ids", idsList);

...

Result SQL in this case SQL tuples will looks like

Hibernate: select orablogent0_.id as id1_0_, orablogent0_.body as body2_0_, orablogent0_.title as title3_0_ from orablogentity orablogent0_ where (orablogent0_.id , 0) in ((?,0),(?,0),(?....)

In case custom SQLFunction implementation:

Hibernate: select orablogent0_.id as id1_0_, orablogent0_.body as body2_0_, orablogent0_.title as title3_0_ from orablogentity orablogent0_ where orablogent0_.id in (?,...,?) or orablogent0_.id in (?,...,?)

Below is simple example how custom org.hibernate.dialect.function.SQLFunction can be implemented.

public class SafeInFunction implements SQLFunction {

private final static int IN_CAUSE_LIMIT = 1000;

...

@Override

public String render(Type firstArgumentType, List arguments, SessionFactoryImplementor factory) throws QueryException {

final StringBuilder buf = new StringBuilder();

String fieldName = (String) arguments.get(0);

for (int i = 1; i < arguments.size(); i++) {

if (i % IN_CAUSE_LIMIT == 0) {

buf.deleteCharAt(buf.length() - 1).append(") or ").append(fieldName).append(" in (");

}

buf.append("?,");

}

return buf.deleteCharAt(buf.length() - 1).toString();

}

}

PS. Hibernate provides org.hibernate.dialect.Dialect method to overwrite

/**

* Return the limit that the underlying database places on the number of elements in an {@code IN} predicate.

* If the database defines no such limits, simply return zero or less-than-zero.

*

* @return int The limit, or zero-or-less to indicate no limit.

*/

public int getInExpressionCountLimit() {

return 0;

}

But unfortunately did not provides properly implementation yet and just throw warning

WARN: HHH000443: Dialect limits the number of elements in an IN predicate to 1000 entries. However, the given parameter list [ids] contained 1111 entries, which will likely cause failures to execute the query in the database

Source code of described example available on GitHub

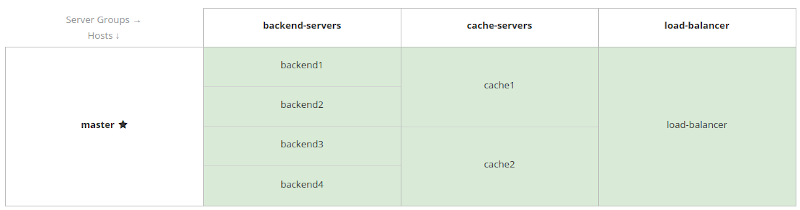

Wildfly/Infinispan domain. Keep distributed cache on separate nodes

03 November 2020

Previously i wrote about development of Jakarta EE application using distributed cache with Wildfly and Infinispan. This solution has a good fit for a small clustered environments where data distribution between nodes will not costs too much. In case you are looking for clustered environment where application scaling should have minimum impact on cache and vice versa, but by some reason you wouldn't like to use a separate infinispan-server cluster as remote-cache-container then next topology can be a solution:

To make it work we need to configure distributed-cache for two server groups and provide zero capacity-factor for one of them. Below is simple configuration example:

# clone current profile

/profile=full-ha:clone(to-profile=full-ha-cache)

# Create cache server group based on new profile

/server-group=cache-servers:add(profile=full-ha-cache, socket-binding-group=full-ha-sockets)

# Add cache container and distributed cache for both profiles

/profile=full-ha/subsystem=infinispan/cache-container=mycachecontainer:add(statistics-enabled=true)

/profile=full-ha-cache/subsystem=infinispan/cache-container=mycachecontainer:add(statistics-enabled=true)

/profile=ful-ha-cache/subsystem=infinispan/cache-container=mycachecontainer/distributed-cache=mycache:add()

/profile=full-ha/subsystem=infinispan/cache-container=mycachecontainer/distributed-cache=mycache:add()

# Create cache servers

/host=master/server-config=cache1:add(group=cache-servers,socket-binding-port-offset=500)

/host=master/server-config=cache1:start(blocking=true)

/host=master/server-config=cache2:add(group=cache-servers,socket-binding-port-offset=600)

/host=master/server-config=cache2:start(blocking=true)

# Configure ZERO capacity for profile which we will use for application

/profile=full-ha/subsystem=infinispan/cache-container=mycachecontainer/distributed-cache=mycache:write-attribute(name=capacity-factor, value=0)

# Provide transport

/profile=full-ha/subsystem=infinispan/cache-container=mycachecontainer/transport=jgroups:add()

/profile=ful-ha-cache/subsystem=infinispan/cache-container=mycachecontainer/transport=jgroups:add()

Now let's deploy our application on two server groups

deploy jcache-examples.war --server-groups=backend-servers,cache-servers

You can check cached number-of-entries on each server by

/host=master/server=backend1/subsystem=infinispan/cache-container=mycachecontainer/distributed-cache=mycache:read-resource(include-runtime=true)

/host=master/server=cache1/subsystem=infinispan/cache-container=mycachecontainer/distributed-cache=mycache:read-resource(include-runtime=true)

And be sure that application backend-servers will always show "number-of-entries" => 0

JPA and "ORA-01795: maximum number of expressions in a list is 1000 error" workaround

13 October 2020

Oracle RDBMS has a known 1000 elements limitation on count of parameters for the IN clause.

SELECT * FROM TABLE WHERE ID IN (?,?,?.....?)

In case count of parameters more than 1000, - Oracle throws error: ORA-01795: maximum number of expressions in a list is 1000 error

Exists few ways to deal with it from the SQL point of view:

- Split clause

field IN (n1,...n9999)to portions like(field IN (n1...n999) or field IN (n1000...n1999)...) - Use temporary table to insert IDs and use sub select like

field IN (select id from TMP_IDS) - Use tuples like

where (id, 0) IN ((1, 0)...(n, 0))

Some data mapping frameworks provides solution for above by default, but unfortunately, Hibernate (most popular JPA provider) does not yet.

Fortunately, we can provide custom solution here by overriding EmptyInterceptor.onPrepareStatement(String sql) which is called when SQL string is being prepared and perform some String manipulation with the SQL.

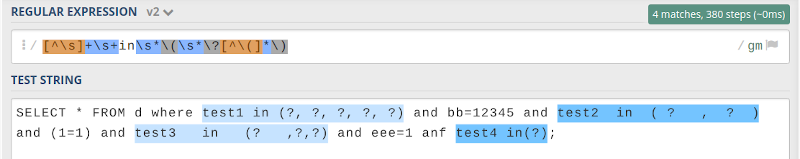

First, we need for regular expression to find IN clause matches in the query. regex101.com provides great help here

Now we are ready to implement interceptor:

public class SafeInInterceptor extends EmptyInterceptor {

private final static Pattern pattern = Pattern.compile("[^\\s]+\\s+in\\s*\\(\\s*\\?[^\\(]*\\)", Pattern.CASE_INSENSITIVE);

private final static int IN_CAUSE_LIMIT = 1000;

@Override

public String onPrepareStatement(String sql) {

return super.onPrepareStatement(this.rewriteSqlToAvoidORA_01795(sql));

}

private String rewriteSqlToAvoidORA_01795(String sql) {

Matcher matcher = pattern.matcher(sql);

while (matcher.find()) {

String inExpression = matcher.group();

long countOfParameters = inExpression.chars().filter(ch -> ch == '?').count();

if (countOfParameters <= IN_CAUSE_LIMIT) {

continue;

}

String fieldName = inExpression.substring(0, inExpression.indexOf(' '));

StringBuilder transformedInExpression = new StringBuilder(" ( ").append(fieldName).append(" in (");

for (int i = 0; i < countOfParameters; i++) {

if (i != 0 && i % IN_CAUSE_LIMIT == 0) {

transformedInExpression

.deleteCharAt(transformedInExpression.length() - 1)

.append(") or ").append(fieldName).append(" in (");

}

transformedInExpression.append("?,");

}

transformedInExpression.deleteCharAt(transformedInExpression.length() - 1).append("))");

sql = sql.replaceFirst(Pattern.quote(inExpression), transformedInExpression.toString());

}

return sql;

}

}

To enable interceptor, - add next property to the your persistence.xml

<property name="hibernate.ejb.interceptor" value="org.kostenko.example.jpa.dialect.SafeInInterceptor" />

Time to test:

@Test

public void transformSelectToSafeIn() throws Exception {

EntityManager em = Persistence.createEntityManagerFactory("myDSTestOra").createEntityManager();

List<Long> idsList = new ArrayList<>();

for (int i = 0; i < 1199; i++) {

idsList.add((long)i);

}

Query q =

em.createQuery("SELECT b FROM OraBlogEntity b WHERE b.id IN (:idsList)", OraBlogEntity.class)

.setParameter("idsList", idsList);

List<OraBlogEntity> blogEntitys = q.getResultList();

System.out.println(blogEntitys.size());

}

Output:

Hibernate:select orablogent0_.id as id1_0_, orablogent0_.body as body2_0_, orablogent0_.title as title3_0_ from orablogentity orablogent0_

where ( orablogent0_.id in (?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?)

or orablogent0_.id in (?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?))

As you can see SQL was splited. No source code changes required and no ORA-01795 anymore.

Source code of described example as usual available on GitHub

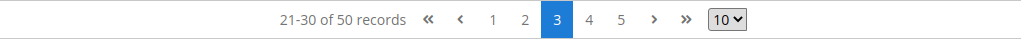

Jakarta EE JPA paging and COUNT(*) OVER()

02 October 2020

Almost all data related applications and UI\UX practices need for paging. Jakarta EE JPA specification helps to do it on backend side by providing simple Query API:

setFirstResult(int startPosition)- Set the position of the first result to retrieve.setMaxResults(int maxResult)- Set the maximum number of results to retrieve.

Typically, to implement paging with JPA you need for two queries: one to select page and second to select total count to calculate count of pages. It works well with simple queries and well described in many articles. But real world enterprise application often enough operates with complex queries with complex filters on big amount of data and unfortunately second query is not for free here from performance point of view.

Fortunately, since JPA 2.1 developers can use function() to call not standard DB functions. Let's play around it to use power of database window functions and JPA usability.

Actually, in case Hibernate JPA provider all we need is register our custom function for our custom dialect like:

public class MyOraDialect extends Oracle10gDialect {

public MyOraDialect () {

super();

registerFunction("countover", new SQLFunctionTemplate(StandardBasicTypes.INTEGER, "count(*) over()"));

}

}

and use dialect above in the our application persistence.xml:

<property name="hibernate.dialect" value="org.kostenko.example.jpa.dialect.MyOraDialect"/>

Looks so easy, - time to test!

public class OraTest {

@Test

public void countOver() throws Exception {

EntityManager em = Persistence.createEntityManagerFactory("myDSTestOra").createEntityManager();

this.generateTestData(em);

Query query = em.createQuery("SELECT b as post, function('countover') as cnt FROM OraBlogEntity b", Tuple.class);

query.setFirstResult(10);

query.setMaxResults(5);

List<Tuple> tpList = query.getResultList();

for (Tuple tp : tpList) {

System.out.println(tp.get("post"));

System.out.println("Total:" + tp.get("cnt"));

}

}

...

}

Output:

Hibernate: select * from ( select row_.*, rownum rownum_ from ( select orablogent0_.id as col_0_0_, count(*) over() as col_1_0_, orablogent0_.id as id1_0_, orablogent0_.body as body2_0_, orablogent0_.title as title3_0_ from orablogentity orablogent0_ ) row_ where rownum <= ?) where rownum_ > ?

OraBlogEntity{id=151, title=title7, body=body7}

Total:3003

OraBlogEntity{id=152, title=title8, body=body8}

Total:3003

...

Please, note: count(*) over() construction is not supported by all RDBMS, but in turn supports by Oracle, Postgres, MSSQL Server and others.

Source code of described example as usual available on GitHub

JPA @ManyToOne. Keep separate reference by ID and by Entity

01 October 2020

Some time you may need to keep reference by class and reference by ID for your JPA @Entity. It can be very helpful, for example, to do some default JSON serialization with no risk to stuck with well known N+1 issue. In this case i would like to avoid @OneToMany and @ManyToOne fields serialization by default and use ID reference instead.

So, below is simple example how to do above.

@Entity

@Table(name = "book")

public class Book {

@Id

@GeneratedValue(strategy = GenerationType.IDENTITY)

private long id;

@Column(name = "name")

private String name;

@JoinColumn(name = "author", insertable = false, updatable = false)

@ManyToOne(targetEntity = Author.class, fetch = FetchType.LAZY)

private Author author;

@Column(name = "author")

private long authorId;

...

public void setAuthor(Author author) {

setAuthorId(author.getId());

this.author = author;

}

...

}

Testing time:

public class ManyToOneTest {

@Test

public void relationManyToOneTest() {

EntityManager em = Persistence.createEntityManagerFactory("myDSTest").createEntityManager();

this.generateTestData(em);

List <Book> books =em.createQuery("FROM Book", Book.class).getResultList();

// lazy loading test

for (Book b : books) {

System.out.println("Bookd:" + b.getName());

System.out.println("AuthorId:" + b.getAuthorId());

// lazy load

System.out.println("Author:" + b.getAuthor());

}

// JPQL with direct id reference

books = em.createQuery("FROM Book where authorId = 1", Book.class).getResultList();

// JPQL with author.id reference

books = em.createQuery("FROM Book where author.id = 1", Book.class).getResultList();

}

private void generateTestData(EntityManager em) {

em.getTransaction().begin();

Author author = new Author();

author.setName("A Name");

em.persist(author);

Book book = new Book();

book.setName("Book Name");

book.setAuthorId(author.getId());

//book.setAuthor(author);

em.persist(book);

em.getTransaction().commit();

em.clear();

}

}

Test Output:

...

Hibernate: select book0_.id as id1_2_, book0_.author as author2_2_, book0_.name as name3_2_ from book book0_

Bookd:Book Name

AuthorId:1

# call book.getAuthor():

Hibernate: select author0_.id as id1_0_0_, author0_.name as name2_0_0_ from author author0_ where author0_.id=?

Author:Author{id=1, name=A Name}

PS: book.getAuthor().getId() will not trigger Author lazy loading, but book.getAuthor().getName() will.

Source code of described application available on GitHub